Open-world evaluations for measuring frontier AI capabilities ↗

A collaborative paper defines open-world evaluations as complex, real-world AI capability tests that go beyond traditional benchmarks, addressing limitations in how frontier AI progress is measured. The authors introduce CRUX, a collaboration of 17 researchers from academia, government, and industry, and report their first experiment: an AI agent successfully built and published an iOS app to the App Store with only two errors. The work aims to provide early warnings about emerging AI capabilities across domains like R&D automation and governance.

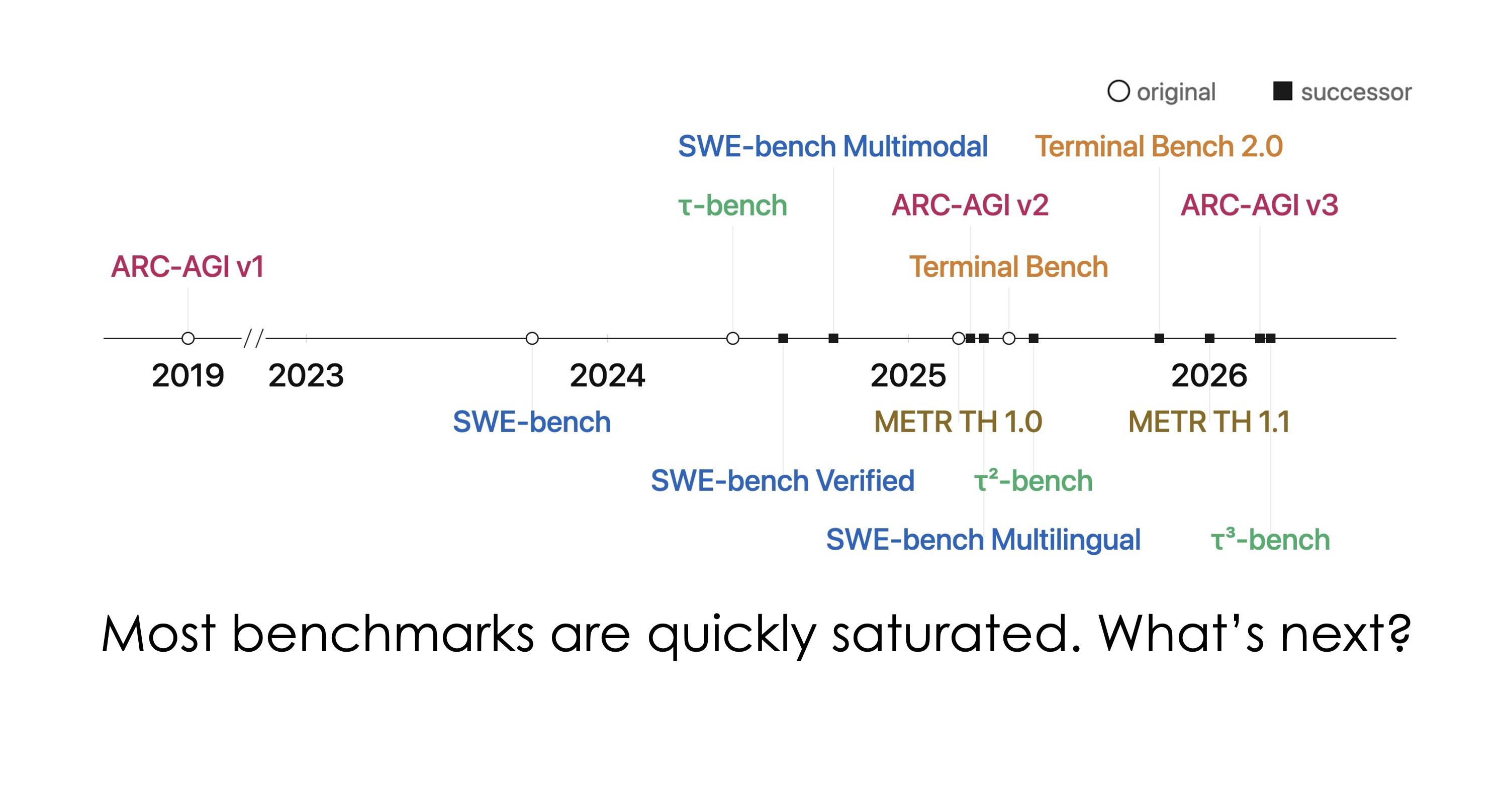

AI models have started to saturate most major benchmarks. But does that mean they can build and ship a real product, or conduct a scientific experiment end-to-end, or navigate a government bureaucracy?

An AI agent built and published an iOS app to the App Store, making just two errors, one of which required manual intervention.